Movement Intelligence for Physical AI

We capture the physics of human motion at population scale: the Ground Truth layer for humanoid robotics, world models, and predictive health. OWN is proud to be part of the NVIDIA Inception program, supercharging the development of next-gen edge AI and real-world motion datasets that shape tomorrow.

Vision

Research in Sensing and Embodiment Lab (RISE)

OWN RISE Lab pioneers Movement Intelligence, unlocking the physics of human motion to power embodied AI. Every step captures rich, real-world data on gait dynamics, terrain adaptation, and physiological signals. We bridge human biomechanics and robotics, enabling safer, more adaptive systems for the physical world.

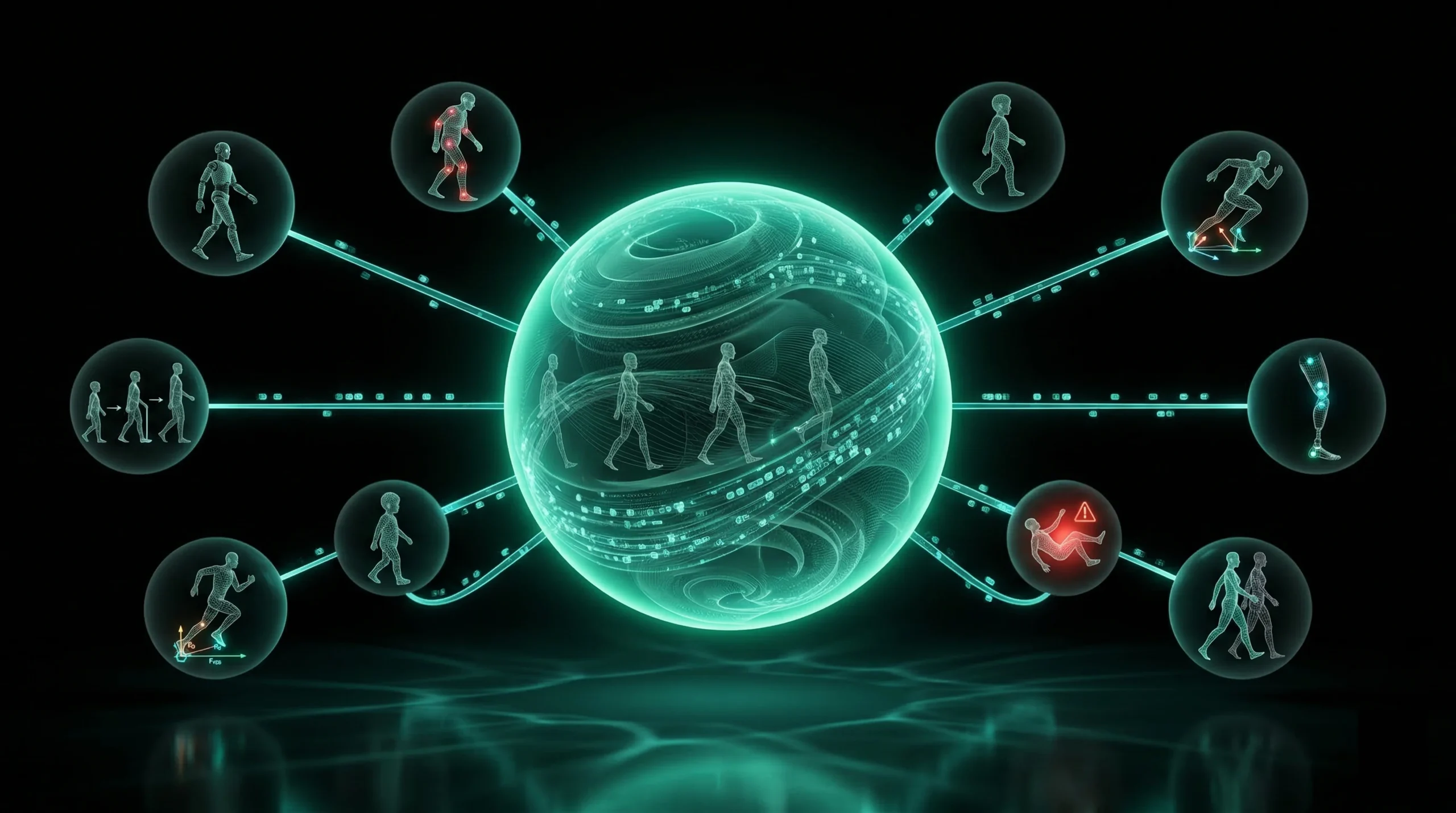

Foundation Models for Human Movement

- LLMs learned language by tokenizing text. Physical AI will learn movement by tokenizing biomechanics.

- OWN captures the raw signal layer that makes this possible: ground reaction forces, pressure maps, stride kinematics, and terrain interaction at millisecond resolution across thousands of real-world users. Every step becomes a token. Every gait sequence becomes a sentence. Every user becomes a corpus.

- This is the path to a Movement Foundation Model: a pretrained representation of human locomotion that transfers across downstream tasks, from fall prediction to humanoid sim-to-real, the way GPT transfers across language tasks.

- No one else is generating this data at scale, in the wild, continuously.
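
As a rough illustration of the step-as-token idea, the sketch below turns a vertical force trace into run-length phase tokens. The threshold rule, the `tokenize_gait` name, and the sample values are assumptions made for illustration; a learned tokenizer of the kind described above would replace the fixed threshold with embeddings trained on data.

```python
from itertools import groupby


def tokenize_gait(force_n, contact_threshold_n=20.0):
    """Map a vertical-force trace (newtons) to run-length gait-phase tokens.

    Hypothetical scheme: a sample is labeled STANCE when its force exceeds
    the threshold and SWING otherwise; consecutive equal labels are merged
    into (label, n_samples) tokens.
    """
    labels = ["STANCE" if f > contact_threshold_n else "SWING" for f in force_n]
    return [(label, len(list(run))) for label, run in groupby(labels)]


# Made-up samples, not real insole data.
trace = [0, 0, 150, 600, 700, 400, 30, 0, 0, 0, 500, 650]
tokens = tokenize_gait(trace)
print(tokens)
# [('SWING', 2), ('STANCE', 5), ('SWING', 3), ('STANCE', 2)]
```

Each token pairs a phase with its duration in samples, so a sequence of tokens reads like the "sentence" of a gait cycle.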

Key Callouts

Tokenized Gait Primitives

- Stride, stance, swing, and loading response encoded as discrete learned embeddings

Pretrained Transfer

- One base model, many downstream tasks: clinical gait analysis, robot locomotion, sports biomechanics

Scaling Law Advantage

- Performance improves predictably with more users, more steps, more terrain diversity

The sim-to-real gap limits physical AI

- Simulation Fidelity

Robots trained in sim produce conservative, unnatural movement

- Terrain Blindness

World models lack physics grounding

- Data Scarcity

VLA architectures need multimodal data at scale

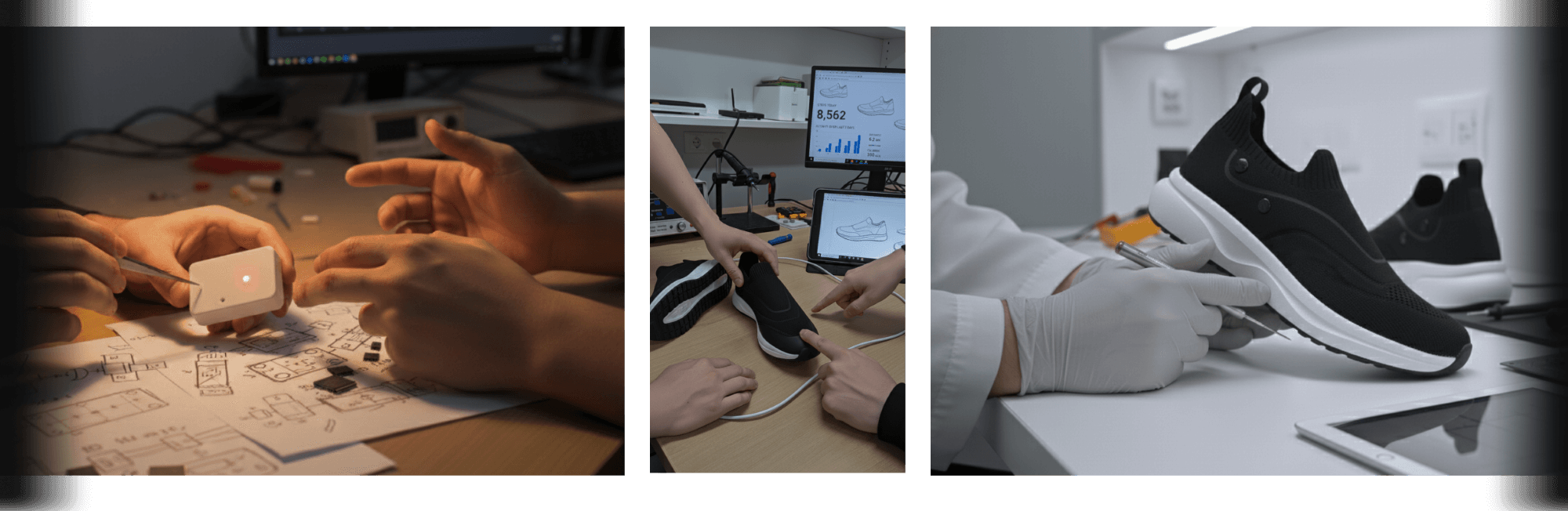

Ground Truth Pipeline

Capture

High-fidelity insoles record force, pressure, inertial, and cardiovascular signals at 300 Hz during natural locomotion

Process

Edge AI computes calibrated ground reaction forces, detects slips, and estimates foot pose on-device

Annotate

App-based labeling of terrain (gravel, ice, stairs), activity (commute, trail), events (near-falls), and state (fatigue, stability)

Output

Multimodal datasets ready for training world models, locomotion controllers, and health predictors
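
One way to picture what the Output stage hands to a training job is a per-step record that carries the signal together with its annotations. The sketch below is hypothetical: `StepRecord`, its field names, and the JSON Lines serialization are assumptions, not a published OWN format. Only the 300 Hz capture rate and the label categories come from the pipeline description above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List

SAMPLE_RATE_HZ = 300  # capture rate stated in the pipeline description


@dataclass
class StepRecord:
    """One annotated step. All field names are illustrative assumptions."""

    force_n: List[float]  # vertical force samples, in newtons
    terrain: str          # e.g. "gravel", "ice", "stairs"
    activity: str         # e.g. "commute", "trail"
    events: List[str] = field(default_factory=list)  # e.g. ["near_fall"]

    @property
    def duration_s(self) -> float:
        """Step duration implied by the sample count and capture rate."""
        return len(self.force_n) / SAMPLE_RATE_HZ


record = StepRecord(
    force_n=[0.0] * 180,  # placeholder samples, not real data
    terrain="gravel",
    activity="trail",
    events=["near_fall"],
)

# Serialize the stored fields as one line of a JSON Lines file.
jsonl_line = json.dumps(asdict(record))
```

Keeping the raw samples and the labels in one record means a downstream loader can filter by terrain or event without a separate annotation file.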

Three Core Products

OWN RISE Motion

Curated multimodal datasets for robotics training

- Population-scale ecological data (not lab-constrained)

- Formatted for major physics engines and RL frameworks

OWN RISE World

Terrain datasets for sim-to-real transfer

- Surface properties cameras can't capture (friction, compliance, stability)

- 100+ terrain types mapped with ground interaction data

- Physics priors for world models and synthetic data validation

OWN RISE Health

Foundation models for disease prediction

- "The Sixth Vital Sign" (gait predicts mortality)

- Presymptomatic detection: neurodegeneration, cardiovascular disease, frailty

- Edge-to-cloud deployment options

Key research areas

Gait & Balance

Quantify asymmetry, stability, and recovery for fall prevention and bipedal robot locomotion
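
Asymmetry is often summarized with a symmetry index that compares a left-side and a right-side measurement. The sketch below uses one common definition, the absolute difference divided by the mean of the two sides, expressed as a percentage. Whether OWN uses this particular index is not stated, and the function name and example values are illustrative.

```python
def symmetry_index(left, right):
    """Percent asymmetry between left and right values of one gait metric.

    Returns 0.0 for perfectly symmetric values; larger values mean more
    asymmetry. Inputs are assumed to be non-negative measurements such as
    stance time in seconds or peak force in newtons.
    """
    mean = 0.5 * (left + right)
    if mean == 0:
        raise ValueError("symmetry index is undefined when both sides are zero")
    return 100.0 * abs(left - right) / mean


# Made-up stance times (seconds) for the left and right foot.
si = symmetry_index(0.66, 0.54)
print(f"stance-time asymmetry: {si:.1f}%")
```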

Foot Pose & Terrain

Map ground interactions across 100+ surfaces, informing sim-to-real transfer

Cardiovascular Signals

Pair heart rate with motion for fatigue detection and load response

Edge Cases

Annotated slips, perturbations, and adaptations from real-world steps

Powering breakthroughs in humanoid robotics, clinical gait analysis, and synthetic data calibration.

Applications

Sim-to-Real Transfer

Calibrate physics engines and enable zero-shot policy transfer

World Models

Physics priors that video-only models lack

Vision-Language-Action

Multimodal training data for generalist robot policies

Clinical Biomarkers

Remote monitoring and digital therapeutics endpoints

Every Step is the Ground Truth

OWN RISE Lab collaborates on dataset access, joint publications, and co-research. Ideal for robotics, biomechanics, and health AI groups.